EC2 inventory from different AWS Accounts in a CSV file

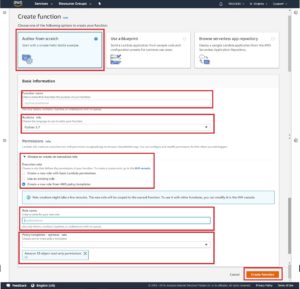

1. Create the Lambda function as follows:


- Go to Lambda from the AWS Services menu.

- Click the Create function button on the Lambda dashboard.

- Choose the Author from scratch option.

- Enter a function name and select Python 3.7 as the runtime.

- Assign S3 permissions to the function's execution role:

- Select the option to create a role from AWS policy templates.

- Enter a role name.

- Choose a policy template.

- Attach S3 write permission (AmazonS3FullAccess) so the function can store the CSV file in the S3 bucket.

- Click the Create function button.

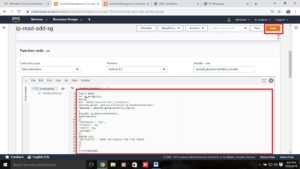
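AmazonS3FullAccess grants access to every bucket in the account. If you prefer a narrower permission, the following sketch builds an inline policy that allows only the upload this function performs. The bucket name is a placeholder, and s3_upload_policy is a hypothetical helper. S3 authorizes upload_file (including multipart uploads) through s3:PutObject on the objects in the bucket.

```python
import json


def s3_upload_policy(bucket_name):
    """Build a minimal IAM policy that only allows writing objects to one bucket.

    bucket_name should match the value you later assign to s3_bucket in the code.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # upload_file() needs PutObject on the objects inside the bucket
                "Action": "s3:PutObject",
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


# "my-inventory-bucket" is a placeholder bucket name
print(json.dumps(s3_upload_policy("my-inventory-bucket"), indent=2))
```

Paste the printed JSON as an inline policy on the execution role in place of AmazonS3FullAccess.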

2. Next, configure the Lambda function:


- Add a trigger, if required.

- Copy the function code below:

```python
import csv
from datetime import date

import boto3


def lambda_handler(event, context):
    # One tuple per account: (access_key_id, secret_access_key, account_name)
    # e.g. [('<acc_id>', '<acc_sec_id>', '<acc_nm>'), ('<acc_id>', '<acc_sec_id>', '<acc_nm>')]
    account_details = [('', '', ''), ('', '', '')]

    # Regions in which to check EC2 instances, e.g. ['<region_code>', '<region_code>']
    regions = ['us-east-1', 'ap-south-1']

    # Tag keys to report as extra columns, e.g. ['<tag_key>', '<tag_key>', '<tag_key>']
    tag_keys = ['', '', '']

    s3_bucket = ''  # S3 bucket that will store the CSV file

    today = date.today()
    file_name = 'ec2_instance_report_' + today.strftime('%d-%m-%y') + '.csv'
    file_location = '/tmp/' + file_name  # /tmp is writable inside Lambda

    # csv.writer quotes values that contain commas (e.g. in tag values)
    with open(file_location, 'w', newline='') as file:
        writer = csv.writer(file)
        writer.writerow(['account_id', 'account_name', 'region', 'instance_id',
                         'instance_name', 'instance_type', 'platform', 'state',
                         'vpc', 'subnet', 'private_ip'] + tag_keys)

        for access_key, secret_key, account_name in account_details:
            boto_session = boto3.session.Session(aws_access_key_id=access_key,
                                                 aws_secret_access_key=secret_key)
            # Look up the account ID once per account instead of once per instance
            account_id = boto_session.client('sts').get_caller_identity()['Account']

            for region in regions:
                ec2 = boto_session.resource('ec2', region_name=region)
                for instance in ec2.instances.all():
                    instance_name = ''
                    output_tags = {}
                    # instance.tags is None when an instance has no tags
                    for tag in instance.tags or []:
                        if tag['Key'] == 'Name':
                            instance_name = tag['Value']
                        elif tag['Key'] in tag_keys:
                            output_tags[tag['Key']] = tag['Value']

                    # platform is None for Linux instances, so that column shows 'None';
                    # VPC, subnet and private IP can be None (e.g. terminated instances)
                    row = [account_id, account_name, region, instance.id,
                           instance_name, instance.instance_type,
                           str(instance.platform), instance.state['Name'],
                           instance.vpc_id or '', instance.subnet_id or '',
                           instance.private_ip_address or '']
                    # One cell per requested tag key, empty if the instance lacks it
                    row += [output_tags.get(key, '') for key in tag_keys]
                    writer.writerow(row)

    s3 = boto3.client('s3')
    s3.upload_file(file_location, s3_bucket, file_name)
```

3. Make the required changes in the code:

- Keep the indentation exactly as shown when you paste the code into the Lambda editor. Python uses indentation to define code blocks.

- Give the following inputs:

  - Account details (account_details): one (access key ID, secret access key, account name) tuple per account

  - Regions (regions): the region codes to check, separated by commas in a list

  - Tag keys (tag_keys): the tags whose values you want to retrieve

  - S3 bucket (s3_bucket): the name of the bucket that will store the CSV file
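The tag handling inside the function's loop is plain Python, so you can check it locally without AWS access. The sketch below uses two hypothetical helpers, split_tags and tag_columns, that mirror the loop body. The sample tags are invented and shaped like the list boto3 returns for instance.tags.

```python
def split_tags(tags, tag_keys):
    """Return (instance_name, {tag_key: value}) the same way the Lambda loop does.

    tags is a list of {'Key': ..., 'Value': ...} dicts, or None for an untagged instance.
    """
    instance_name = ''
    output_tags = {}
    for tag in tags or []:
        if tag['Key'] == 'Name':
            instance_name = tag['Value']
        elif tag['Key'] in tag_keys:
            output_tags[tag['Key']] = tag['Value']
    return instance_name, output_tags


def tag_columns(output_tags, tag_keys):
    """One CSV cell per requested tag key, empty when the instance lacks that tag."""
    return [output_tags.get(key, '') for key in tag_keys]


# Invented sample data for illustration only
sample = [{'Key': 'Name', 'Value': 'web-1'},
          {'Key': 'Env', 'Value': 'prod'},
          {'Key': 'Team', 'Value': 'ops'}]
name, found = split_tags(sample, ['Env', 'Owner'])
print(name, tag_columns(found, ['Env', 'Owner']))  # web-1 ['prod', '']
```

Tags that are not in tag_keys (Team here) are ignored. Requested keys that are missing (Owner) produce an empty cell, so every row keeps the same number of columns as the header.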

4. Click Save to save the function code and the configuration changes.

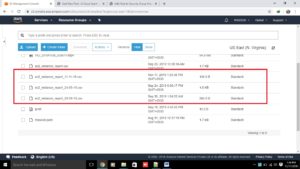

5. Trigger the Lambda function by invoking (calling) it.
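The function can also be invoked programmatically. The sketch below is a minimal example that assumes you pass in a boto3 Lambda client and uses that client's invoke call. The helper name invoke_report is hypothetical. The handler ignores its event, so an empty JSON payload is sent.

```python
import json


def invoke_report(lambda_client, function_name):
    """Synchronously invoke the inventory function and return its raw response text.

    lambda_client is expected to be a boto3 Lambda client, e.g. boto3.client('lambda').
    """
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType='RequestResponse',  # wait for the function to finish
        Payload=json.dumps({}),            # the handler does not read the event
    )
    payload = response['Payload'].read().decode('utf-8')
    # FunctionError is set when the handler raised an exception
    if response.get('FunctionError'):
        raise RuntimeError(payload)
    return payload
```

For example, call invoke_report(boto3.client('lambda'), 'ec2-inventory'), where 'ec2-inventory' stands in for the name you gave the function in step 1. Because the handler returns nothing, a successful call returns the text null.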

6. After execution, the CSV report is available in the S3 bucket.
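The function stores each report under a date-based key ('ec2_instance_report_' plus the '%d-%m-%y' date). The sketch below reads a day's report back and assumes that same key pattern. report_key, parse_report and fetch_report are hypothetical helper names, and s3_client would be a boto3 S3 client such as boto3.client('s3').

```python
import csv
import io
from datetime import date


def report_key(day):
    """Object key the Lambda function uses for the report of a given day."""
    return 'ec2_instance_report_' + day.strftime('%d-%m-%y') + '.csv'


def parse_report(text):
    """Turn the CSV report into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


def fetch_report(s3_client, bucket, day):
    """Download and parse one day's report from the given bucket."""
    obj = s3_client.get_object(Bucket=bucket, Key=report_key(day))
    return parse_report(obj['Body'].read().decode('utf-8'))


print(report_key(date(2024, 3, 5)))  # ec2_instance_report_05-03-24.csv
```

The key is day-first, so keys do not sort chronologically in the S3 console.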

7. Thank you.